Many of us start each day with a long to-do list, a new set of goals and a commitment not to repeat the mistakes we have made in the past. It’s likely that we will have promised ourselves to stop putting things off. On our hit list of the foibles we most want to dispose of, procrastination will be somewhere near the top. The problem is that, because procrastination is linked to psychological factors such as an innate preference for doing something we deem pleasurable over something we don’t, modern life encourages us to do it.

Procrastination is becoming more of a problem as we surround ourselves with a growing number of distractions. “Why”, we might ask ourselves, “should we write that 900-word feature right now when we could be taking the dog for a walk in the sunshine, watching just one more episode of Game of Thrones, making ourselves yet one more cup of coffee, daydreaming, checking email and messages, or inviting a digital affirmation of our wisdom, attractiveness, exciting life or hilarity from somebody on social media?”

So it’s no wonder that this is the golden age of procrastination, according to Dr Piers Steel, who knows a thing or two about it and has shared his thoughts, research and proposed solutions in a book called The Procrastination Equation.

Nearly everybody (around 95 percent) procrastinates at some time or other, he claims. One in four people describe themselves as chronic procrastinators and over half the population would describe themselves as frequent procrastinators. In the last 40 years there has been growth of about 300 to 400 percent in what he considers chronic procrastination. It’s no coincidence that this increase is linked to the digitisation of the world. Dr Steel’s book offers some guidance on how to overcome procrastination but, obviously and ironically, there’s an app for that too.

Procrastination may be endemic but it’s nothing new, nor is it always a bad thing. We know, for example, how creative thoughts often come unbidden when our minds are doing something else. There is also something called the Zeigarnik effect, based on the work of the psychologist Bluma Zeigarnik, which states that people remember uncompleted or interrupted tasks better than completed tasks. That has obvious implications for learning because it suggests that taking a break during a period of study will help you to remember things better.

Slaves to the rhythm

There’s a lot to be said for not being slaves to the clock and the screen. Ironically, the way we measure time has its roots in a famous instance of daydreaming. The story goes that in 1583 a young student at the University of Pisa called Galileo Galilei was daydreaming in the pews while his fellow students were dutifully reciting their prayers. He noticed that one of the altar lamps was swaying back and forth and that, even as its energy dissipated and the arc of each swing shortened, each swing took the same amount of time as the last, measured against his own pulse.

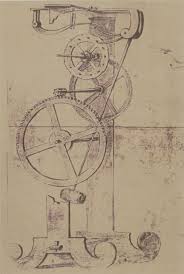

He packed the idea away and returned to it later in life, around 1602, when he built a pendulum to test whether he was right in concluding that the time taken for it to swing is determined solely by its length. What he found was that “the marvellous property of the pendulum is that it makes all its vibrations, large or small, in equal time.”

This was groundbreaking stuff for the period. Mechanical clocks existed but had to be reset daily by checking them against a sundial. This was OK for the time, when deadlines and timekeeping were not dependent on seconds, but the idea had been sown that it was possible to keep time mechanically with almost perfect precision.

Timekeeping only became a preoccupation during the Industrial Revolution, when it became important for the new generation of trains to run on time and for the working hours and productivity of the workforce to be measured. It’s fair to say that this was the start of the co-dependent relationship between timekeeping and industrialised work. One was not possible without the other. Before the 18th century there was no real idea of the working day or of hourly or daily pay. It was all about tasks.

Lost in time

It’s something to bear in mind because it might appear that what we think of as a feature of modern working life is largely a return to the way things have always been. It could well be that history will view the working cultures of the past 250 years as the aberration.

Our whole attitude to time began to change and by the age of the Victorians had hardened into what we essentially still perceive. Charles Dickens described it in Hard Times as that “deadly statistical clock which measured every second with a beat like a rap upon a coffin lid.” Galileo’s ideas about the regularity of the pendulum’s swing ensured that pendulums would be the most accurate way for us to measure time right up until the 1930s and the dawn of the technological and nuclear age.

There is one way in which the modern world is very different, however. We now measure computing power against time, by how many operations a processor can perform in a set period. We also know, thanks to Moore’s Law, that this power doubles approximately every 18 months and has been doing so for half a century.

The problem is that this is the new benchmark we have set ourselves for our own lives. The author Charles Handy encapsulated the thinking behind it twenty or so years ago when he described it as half the people doing twice the work in half the time. That remains the goal, but it is one with constantly moving goalposts. We would do well to remember that sometimes we need to succumb to our baser instincts and human needs, and those include the desire to stare, dream, pause and put things off.

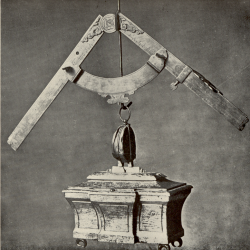

Image: Galileo’s Lodestone and Compass By Charles Singer [Public domain], via Wikimedia Commons

July 30, 2020

The golden age of procrastination and the tyranny of time keeping

by Mark Eltringham • Comment, Features, Wellbeing, Workplace
